Redefine What's Possible

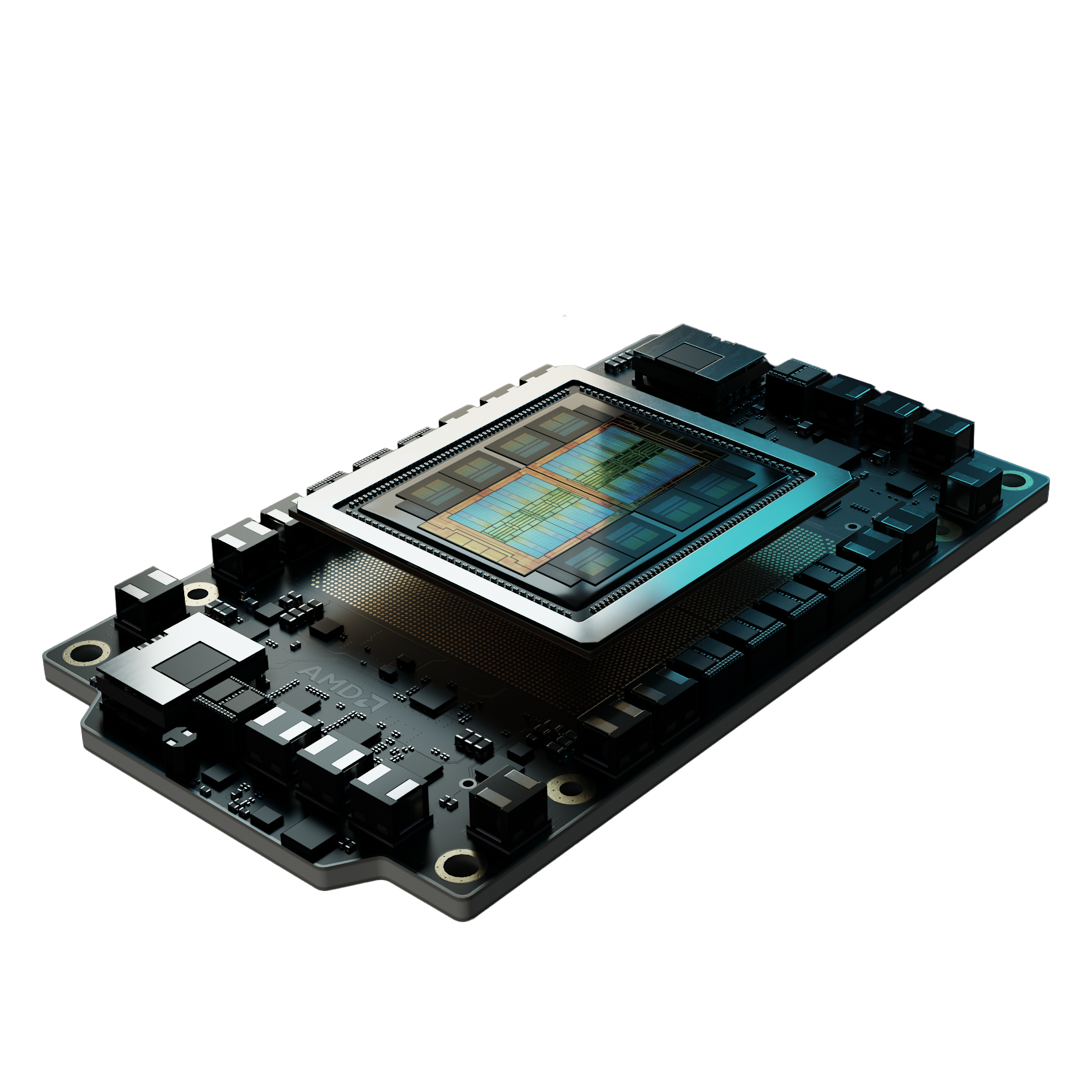

AMD Instinct™ MI300X

Breakthrough AI performance with 192GB of HBM3 per GPU and up to 2.8X training speedup. Purpose-built for frontier model development and massive-scale inference workloads.

MI300X Server Configuration

Our AMD Instinct MI300X clusters deliver consistent performance and scalability.

Whether you're developing frontier LLMs or deploying latency-sensitive inference applications, TensorWave's AMD MI300X cluster offers a seamless, cost-effective path from prototyping to production.

Standard Node Config

Bare Metal

with optional managed Kubernetes & Slurm

Accelerators

8x AMD Instinct MI300X

CPUs

2x AMD EPYC 9575F "Turin" (128 cores, 256 threads total)

Memory

3 TB DDR5 6000 MT/s

Local Storage

4x 3.84 TB NVMe drives + 2x 893 GB M.2 drives

Node Interconnects

3.2 Tb/s

Peak Network Storage

50 PB
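To get a feel for what one standard node holds, the sketch below does back-of-envelope sizing from the figures above. It is illustrative only and rests on assumptions that are not part of this spec sheet: 192 GB of HBM3 per MI300X (AMD's published capacity), 2 bytes per parameter (FP16/BF16 weights), decimal units, and an ideal link running at full line rate. It ignores activations, KV cache, optimizer state, and framework overhead, so real headroom is smaller.

```python
# Rough per-node sizing sketch (illustrative, not a capacity guarantee).
# Assumptions: 192 GB HBM3 per MI300X (AMD's published figure), BF16 weights
# at 2 bytes/param, decimal units (1 GB = 1e9 bytes), ideal full-rate link.

GPUS_PER_NODE = 8        # "8x AMD Instinct MI300X"
HBM_PER_GPU_GB = 192     # assumption: AMD's published MI300X capacity
NODE_LINK_TBPS = 3.2     # "3.2 Tb/s" node interconnect


def node_hbm_gb(gpus: int = GPUS_PER_NODE, per_gpu_gb: float = HBM_PER_GPU_GB) -> float:
    """Aggregate accelerator memory in one node, in GB."""
    return gpus * per_gpu_gb


def weights_gb(params_billion: float, bytes_per_param: float = 2.0) -> float:
    """Memory needed only for the model weights, in GB."""
    return params_billion * bytes_per_param


def weights_fit(params_billion: float, bytes_per_param: float = 2.0) -> bool:
    """True if the raw weights fit in one node's aggregate HBM."""
    return weights_gb(params_billion, bytes_per_param) <= node_hbm_gb()


def transfer_seconds(size_gb: float, link_tbps: float = NODE_LINK_TBPS) -> float:
    """Ideal time to move size_gb across the node link at full line rate."""
    link_gb_per_s = link_tbps * 1000 / 8  # Tb/s -> GB/s
    return size_gb / link_gb_per_s


if __name__ == "__main__":
    print(f"Aggregate HBM per node: {node_hbm_gb():.0f} GB")
    for size in (70, 405):
        need = weights_gb(size)
        print(f"{size}B params @ BF16: {need:.0f} GB, fits: {weights_fit(size)}, "
              f"ideal transfer: {transfer_seconds(need):.2f} s")
```

Treat these numbers as an upper bound: training typically needs several times the raw weight footprint, and sustained network throughput is usually below the 3.2 Tb/s peak.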